Automated Proposal Scoring vs. Traditional Methods Compared

A data-driven breakdown of efficiency gains, accuracy, and ROI for event professionals managing sponsorship portfolios at scale

Discover which proposal evaluation approach fits your event portfolio. This comparison reveals when automated scoring delivers 17-minute turnarounds versus when traditional methods still make sense.

TL;DR

Automated scoring wins on speed - 17 minutes average versus hours or days for manual evaluation, with efficiency gains compounding across larger portfolios

Traditional methods suit small operations - Organizations handling fewer than 30 proposals annually or operating in highly relationship-driven niches may not see positive ROI from automation

65% of teams now use proposal management tools - Rapid adoption reflects the clear efficiency advantages for organizations scaling sponsorship operations

The best approach is hybrid - Use automated systems for routine evaluation and portfolio analytics while preserving human judgment for relationship-critical partnerships

Implementation requires 2-4 months - Plan for data migration, team training, and workflow adjustment before automated systems deliver full efficiency gains

The Sponsorship Efficiency Crossroads

Event managers and conference directors face a critical decision point. You're juggling multiple events, dozens of potential sponsors, and limited hours to evaluate which partnerships deliver real value. The question isn't whether to improve your proposal evaluation process. It's how.

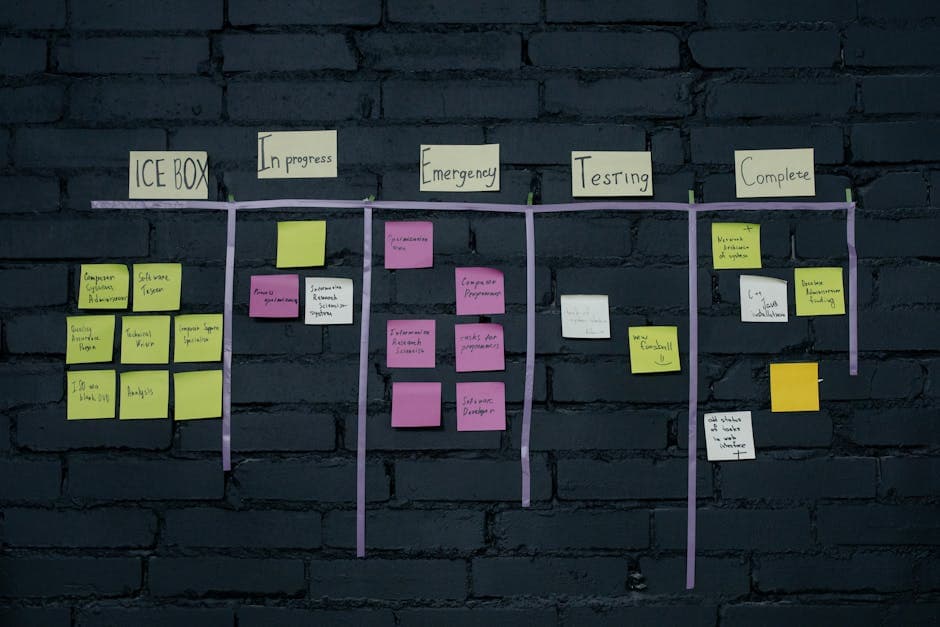

Traditional methods have served the industry for decades. Manual spreadsheets, gut instincts, and relationship-based decisions still dominate many organizations. Meanwhile, automated proposal scoring systems promise faster turnarounds and data-backed decisions.

This comparison examines both approaches through the lens of what matters most to you: scaling operations efficiently while maximizing sponsorship revenue across your portfolio.

Quick Verdict: When Each Approach Wins

Choose automated proposal scoring if you manage three or more events annually, need consistent evaluation criteria across your team, or want to reduce proposal turnaround from days to minutes.

Choose traditional methods if you handle fewer than 30 sponsorship proposals per year, operate in a highly relationship-driven niche where personal judgment outweighs metrics, or lack the budget for software implementation.

For most event professionals managing portfolios at scale, automation delivers measurable efficiency gains that traditional methods simply cannot match.

| Criterion | Automated Scoring | Traditional Methods | Winner |
|---|---|---|---|
| Speed | 17 minutes average | Hours to days | Automated |
| Consistency | Standardized criteria | Variable by evaluator | Automated |
| Cost (Initial) | Higher upfront | Lower entry point | Traditional |
| Scalability | Handles volume easily | Linear effort increase | Automated |
| Relationship Nuance | Data-dependent | Human judgment | Traditional |
| Portfolio Analytics | Built-in dashboards | Manual compilation | Automated |
| Learning Curve | Training required | Familiar processes | Traditional |

How We Evaluated These Approaches

We assessed both methods across seven dimensions that directly impact event managers scaling sponsorship operations.

Processing Speed measures time from proposal receipt to evaluation completion. For teams handling multiple events, this determines whether you can respond competitively to sponsor inquiries.

Evaluation Consistency examines whether different team members reach similar conclusions using the same criteria. Inconsistency creates portfolio-wide blind spots.

Scalability tests how each approach handles volume increases. Can you double your event portfolio without doubling your evaluation team?

Cost Structure considers both upfront investment and ongoing operational expenses, including hidden costs like staff time.

Analytics Capability assesses the ability to generate portfolio-wide insights, track performance trends, and demonstrate ROI to stakeholders.

Integration Flexibility evaluates how well each approach connects with existing CRM systems, financial tools, and reporting workflows.

Relationship Preservation considers whether the evaluation method maintains the personal connections that make sponsorships valuable.

Head-to-Head Breakdown

Processing Speed: The Efficiency Gap

Automated Scoring: According to recent automation research, average proposal creation and evaluation time has dropped to 17 minutes with modern tools. Automated systems ingest proposal data, apply weighted scoring criteria, and generate preliminary recommendations almost instantly.

The limitation? Complex proposals with non-standard elements may require manual review after initial scoring.
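To make "weighted scoring criteria" concrete, here is a minimal sketch of how such a score might be computed. The criterion names, weights, 70-point threshold, and the `non_standard` flag are all hypothetical choices for illustration, not the behavior of any particular platform.

```python
from dataclasses import dataclass

# Hypothetical criteria and weights (they sum to 1.0); real systems
# would let each team configure their own.
WEIGHTS = {
    "audience_fit": 0.35,
    "budget": 0.30,
    "past_performance": 0.20,
    "relationship": 0.15,
}


@dataclass
class Proposal:
    sponsor: str
    ratings: dict  # criterion name -> rating on a 0-10 scale
    non_standard: bool = False  # e.g. an unusual activation format


def score(proposal):
    """Return the weighted score on a 0-100 scale."""
    total = sum(WEIGHTS[name] * proposal.ratings[name] for name in WEIGHTS)
    return round(total * 10, 1)


def recommend(proposal, threshold=70.0):
    """Give a preliminary recommendation; non-standard proposals go to a human."""
    if proposal.non_standard:
        return "manual review"
    return "advance" if score(proposal) >= threshold else "decline"


example = Proposal(
    sponsor="Example Co",
    ratings={"audience_fit": 8, "budget": 6, "past_performance": 7, "relationship": 9},
)
print(score(example), recommend(example))
```

Routing non-standard proposals to manual review before any threshold is applied mirrors the limitation noted above: the score is a first pass, not a final decision.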

Traditional Methods: Manual evaluation typically requires hours per proposal. Senior staff must read documents, cross-reference past performance, and consult with colleagues. For high-stakes partnerships, this thoroughness adds value.

The limitation? This approach doesn't scale. Each additional proposal demands proportional time investment.

Verdict: Automated scoring wins decisively for organizations processing more than 20 proposals annually. The time savings compound across your portfolio.

Evaluation Consistency: Eliminating Subjective Drift

Automated Scoring: Sponsorship analytics tools apply identical criteria to every proposal. Whether evaluated Monday morning or Friday afternoon, by a junior coordinator or senior director, the scoring remains consistent.

This standardization reveals patterns invisible to manual review, such as which sponsor categories consistently underperform or which proposal elements predict partnership success.
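As an illustration of the kind of pattern this makes visible, the sketch below groups past sponsorships by category and flags categories whose average delivery falls below a floor. The `delivery_ratio` measure (delivered value divided by promised value), the 0.8 floor, and the sample records are assumptions made up for this example; it also presumes you record post-event outcomes, which many teams do not.

```python
from collections import defaultdict
from statistics import mean


def underperforming_categories(records, floor=0.8):
    """Return sponsor categories whose mean delivery ratio is below `floor`.

    `records` is an iterable of (category, delivery_ratio) pairs.
    """
    by_category = defaultdict(list)
    for category, delivery_ratio in records:
        by_category[category].append(delivery_ratio)
    return sorted(
        category
        for category, ratios in by_category.items()
        if mean(ratios) < floor
    )


# Hypothetical historical data, for illustration only.
history = [
    ("beverage", 0.6),
    ("beverage", 0.7),
    ("fintech", 1.0),
    ("fintech", 0.9),
]
print(underperforming_categories(history))
```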

Traditional Methods: Human evaluators bring valuable contextual judgment. They recognize relationship history, industry reputation, and strategic fit that algorithms might miss.

However, research from SkyQuest shows that AI-driven scoring systems using NLP and sentiment analysis now capture much of this nuance while maintaining consistency.

Verdict: Automated scoring wins for portfolio-wide consistency. Traditional methods retain an edge for evaluating unique, high-value partnerships requiring deep contextual understanding.

Scalability: Growing Without Proportional Pain

Automated Scoring: Performance tracking software handles volume increases without additional headcount. Processing 50 proposals costs essentially the same as processing 500 once the system is configured.

This scalability enables event managers to expand their sponsorship pipeline without proportional resource increases.

Traditional Methods: Every additional proposal requires human attention. Scaling from 30 to 100 annual proposals typically demands new hires or consultant support.

The math is straightforward: if manual evaluation takes 3 hours per proposal, 100 proposals consume 300 staff hours annually.

Verdict: Automated scoring wins overwhelmingly. The proposal management software market is projected to reach USD 9.0 billion by 2035, a trajectory consistent with organizations recognizing this scalability advantage.

Cost Structure: Initial Investment vs. Long-Term Value

Automated Scoring: Upfront costs include software licensing, implementation, and training. Annual subscriptions for comprehensive sponsorship analytics tools typically range from several thousand to tens of thousands of dollars depending on feature depth.

However, software solutions now account for 59.6% of the proposal management market, suggesting organizations find the ROI compelling.

Traditional Methods: Lower initial investment, but hidden costs accumulate. Staff time, inconsistent outcomes, and missed opportunities create expenses that don't appear on software invoices.

Calculate your true cost by multiplying hours spent on manual evaluation by fully loaded labor rates.
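The calculation can be sketched as follows. The hourly rate and subscription price in the example are placeholders, not benchmarks, and the break-even function assumes (as a simplification) that an automated evaluation still takes about 17 minutes of staff time per proposal.

```python
import math


def manual_cost(proposals_per_year, hours_per_proposal, loaded_hourly_rate):
    """Annual labor cost of manual evaluation: volume x hours x loaded rate."""
    return proposals_per_year * hours_per_proposal * loaded_hourly_rate


def break_even_proposals(annual_software_cost, hours_per_proposal,
                         loaded_hourly_rate, automated_hours=17 / 60):
    """Smallest annual proposal count at which labor savings cover the software.

    Returns None if automation saves no time per proposal.
    """
    saved_per_proposal = (hours_per_proposal - automated_hours) * loaded_hourly_rate
    if saved_per_proposal <= 0:
        return None
    return math.ceil(annual_software_cost / saved_per_proposal)


# Placeholder inputs: 100 proposals, 3 hours each, a $60 loaded hourly rate,
# and a $5,000 annual subscription.
print(manual_cost(100, 3, 60))
print(break_even_proposals(5000, 3, 60))
```

Substitute your own volumes, rates, and quoted subscription price; the break-even point moves substantially with each input, and this sketch ignores implementation and training costs.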

Verdict: Traditional methods win for small operations with minimal proposal volume. Automated scoring delivers superior value for organizations processing more than 30 proposals annually.

Analytics Capability: Seeing Your Portfolio Clearly

Automated Scoring: Modern performance tracking software generates real-time dashboards showing proposal pipeline status, historical win rates, sponsor category performance, and revenue projections.

Recent data shows average RFP win rates increased from 43% to 45% as organizations adopted smarter, data-driven strategies. Analytics capabilities likely contribute to this improvement.

Traditional Methods: Portfolio analytics require manual compilation. Someone must aggregate data from spreadsheets, email threads, and institutional memory into coherent reports.

This process is time-consuming and often incomplete, leaving blind spots in portfolio performance understanding.

Verdict: Automated scoring wins decisively. Real-time portfolio analytics transform sponsorship management from reactive to strategic.

Integration Flexibility: Connecting Your Tech Stack

Automated Scoring: Leading platforms offer API connections to CRM systems, accounting software, and marketing automation tools. Data flows between systems without manual re-entry.

Implementation complexity varies. Some organizations achieve full integration within weeks; others require months of configuration.

Traditional Methods: Manual processes integrate with any system because humans serve as the integration layer. However, this flexibility comes at the cost of efficiency and accuracy.

Data silos persist when information lives in individual spreadsheets and email inboxes.

Verdict: Automated scoring wins for organizations with established tech stacks. Traditional methods may suit organizations with minimal existing software infrastructure.

Relationship Preservation: The Human Element

Automated Scoring: Critics argue that reducing partnerships to scores diminishes relationship value. This concern has merit, but modern systems increasingly incorporate relationship factors as weighted criteria.

The key is using automation to handle routine evaluation while preserving human judgment for relationship-critical decisions.

Traditional Methods: Human evaluators naturally consider relationship history, reputation, and strategic fit. These intangible factors often determine partnership success beyond measurable metrics.

The challenge is ensuring these considerations remain consistent across evaluators and events.

Verdict: Traditional methods retain a slight edge for relationship-intensive sponsorships. However, hybrid approaches that combine automated efficiency with human relationship judgment offer the best of both.

Use Case Mapping: Which Approach Fits Your Situation

If you manage 5+ events annually with shared sponsors, choose automated scoring. Portfolio-wide analytics reveal which sponsors perform across multiple properties and which underdeliver consistently.

If you're building a new sponsorship program from scratch, choose automated scoring. Establishing consistent evaluation criteria from day one prevents the inconsistencies that plague mature programs.

If you operate in a niche where 10 sponsors represent 90% of your revenue, consider traditional methods. Deep relationship management may outweigh efficiency gains when your sponsor universe is small and relationship-intensive.

If your team includes junior staff evaluating proposals, choose automated scoring. Standardized criteria ensure consistent quality regardless of evaluator experience level.

If you're preparing for acquisition or seeking investment, choose automated scoring. Data-driven portfolio analytics demonstrate professionalism and provide the metrics investors expect.

What Both Approaches Get Wrong

Neither automated scoring nor traditional methods solve the fundamental challenge of measuring sponsorship activation quality. Both approaches evaluate proposals based on promised deliverables, not actual execution.

The industry still lacks standardized metrics for comparing sponsorship value across different event types, audience segments, and activation formats. Whether you score proposals manually or automatically, you're working with imperfect predictive data.

Additionally, both approaches struggle with emerging sponsorship formats like hybrid events, influencer partnerships, and community-driven activations that don't fit traditional evaluation frameworks.

Migration and Switching Considerations

Switching from traditional methods to automated scoring requires investment beyond software costs. Plan for data migration (historical sponsor performance, contact relationships, proposal archives), team training, and workflow redesign.

Most organizations report a 2-4 month adjustment period before automated systems deliver full efficiency gains. During this transition, parallel processes may temporarily increase workload.

65% of teams now use RFP software or proposal management tools, up from 48% in 2024. This rapid adoption suggests the switching costs are manageable for most organizations.

Lock-in factors vary by platform. Evaluate data export capabilities before committing. Your sponsor relationships and historical data should remain portable if you later choose to switch providers.

Switching makes sense when manual processes consume more than 10 hours weekly, when your team struggles with evaluation consistency, or when portfolio growth outpaces your capacity to evaluate proposals thoroughly.
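These three triggers can be written down as a simple checklist. The function and parameter names below are hypothetical, and the two yes/no inputs are judgment calls your team would have to make; only the 10-hour threshold comes from the text above.

```python
def switching_triggers(manual_hours_per_week, consistency_problems,
                       growth_exceeds_capacity):
    """Return the list of triggers that currently apply (empty if none)."""
    triggers = []
    if manual_hours_per_week > 10:
        triggers.append("manual evaluation exceeds 10 hours per week")
    if consistency_problems:
        triggers.append("team struggles with evaluation consistency")
    if growth_exceeds_capacity:
        triggers.append("portfolio growth outpaces evaluation capacity")
    return triggers


print(switching_triggers(12, False, True))
```

Any non-empty result suggests a closer look at automation is worthwhile; an empty list suggests the current process may still be adequate.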

The Clear Path Forward

For event managers and conference directors scaling sponsorship operations, automated proposal scoring delivers measurable advantages in speed, consistency, and portfolio analytics. The efficiency gains compound as your event portfolio grows.

Traditional methods remain viable for small operations with minimal proposal volume or highly relationship-driven niches where personal judgment outweighs systematic evaluation.

The most effective approach combines automated efficiency with human relationship judgment. Use performance tracking software to handle routine evaluation and surface insights, then apply human expertise to relationship-critical decisions and strategic partnerships.

With 71% of organizations now regularly using generative AI in at least one business function, the question isn't whether to adopt automation. It's how quickly you can implement it before competitors gain the efficiency advantage.

Frequently Asked Questions

What is portfolio-wide sponsorship management?

Portfolio-wide sponsorship management treats all your events as a unified sponsorship ecosystem rather than isolated properties. This approach uses centralized data and consistent evaluation criteria to identify sponsors who perform well across multiple events, optimize pricing strategies portfolio-wide, and generate consolidated analytics for stakeholder reporting.

How can software improve sponsorship evaluation processes?

Software improves evaluation by applying consistent scoring criteria to every proposal, reducing processing time from hours to minutes, and generating portfolio analytics that reveal performance patterns. Modern platforms also integrate with CRM systems to incorporate relationship history and past sponsor performance into evaluation scores automatically.

When should companies consider using sponsorship management software?

Consider sponsorship management software when manual processes consume more than 10 hours weekly, when your team produces inconsistent evaluations, or when portfolio growth outpaces your capacity to evaluate proposals thoroughly. Organizations managing three or more events annually typically see positive ROI from automation within the first year.

Which features should I look for in a sponsorship management tool?

Prioritize automated proposal scoring with customizable criteria, real-time portfolio dashboards, CRM integration capabilities, and data export functionality. Secondary considerations include mobile accessibility, multi-user collaboration features, and API connections to your existing tech stack. Ensure the platform handles your specific event types and sponsorship formats.

How does the Return On Objectives (ROO) methodology work in sponsorship management?

Return On Objectives measures sponsorship success against specific, predefined goals rather than purely financial metrics. This methodology evaluates whether sponsorships achieved brand awareness targets, audience engagement benchmarks, lead generation goals, or community impact objectives. ROO provides a more comprehensive view of sponsorship value than ROI alone.

What's the typical implementation timeline for automated proposal scoring systems?

Most organizations complete basic implementation within 2-4 weeks, including initial configuration and team training. Full integration with existing CRM systems and workflow optimization typically requires 2-4 months. Plan for a parallel processing period where both manual and automated systems run simultaneously to ensure accuracy before fully transitioning.